The Decisioning Delusion

By Henry Hernandez Reveron

Cheap decisions, expensive consequences, and the architectural test the industry forgot

MarTech has a new (renewed?) obsession: Decisioning.

Every vendor has it. Every analyst is elevating it. Every practitioner seems ready to make it the new center of gravity for customer engagement. Adobe describes Decisioning as a centralized catalog of decision items plus an engine that uses rules and ranking to select the most relevant item for each individual. Pega describes Customer Decision Hub as an “always-on brain” that unifies data, analytics, and channels to predict needs in real time and deliver hyper-personalized next best actions at scale. CDP Institute’s AI Decisioning framing goes further, presenting AI Decisioning as a new layer that can optimize every touchpoint without rigid segmentation or static rules.

That story is seductive. It is also incomplete.

Decisioning is real. It is useful. In some environments, it is transformative. But the market is making a category error and an economic one at the same time. It is mistaking a capability for an architecture, and it is mistaking computational abundance for strategic sustainability.

That is the delusion.

The issue is not whether Decisioning works. The issue is whether the industry is inflating it into something it cannot actually be: the governing abstraction for customer technology. It is not. Decisioning is a bounded capability inside a larger engagement system. And the clearest way to see that is to ask a question most of the current discourse barely asks:

What happens when the decision was wrong?

Not the score.

Not the model output.

Not the campaign metric.

The state. The consequence. The downstream effects. The customer reality.

That is the reversibility question. And once it is asked, much of the industry’s Decisioning rhetoric starts to look thin.

What Decisioning is, before the hype got to it

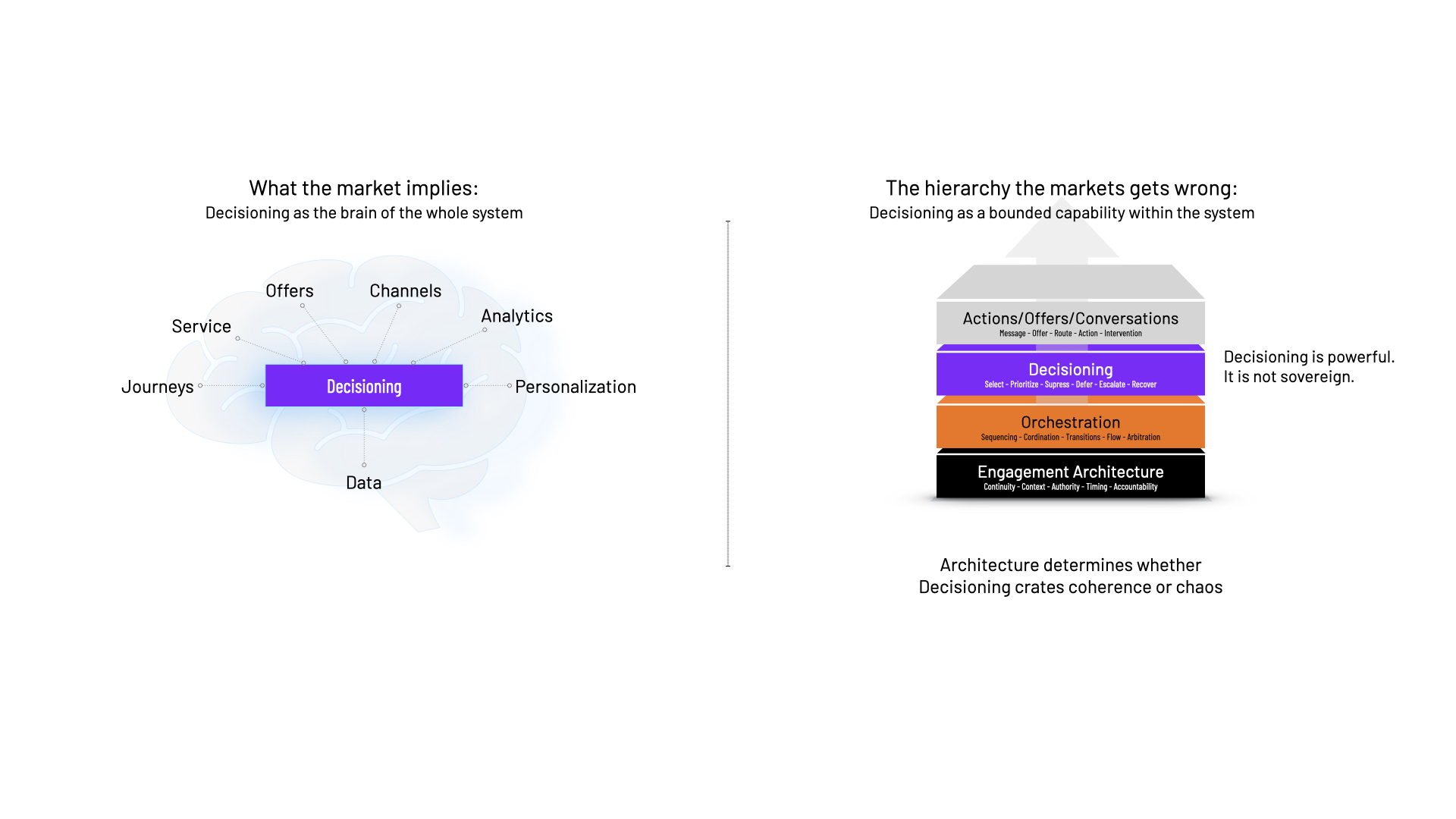

At its most useful, Decisioning is the capability that selects, prioritizes, suppresses, defers, or escalates actions, offers, content, or treatments for a given context under explicit rules, constraints, and ranking logic. Adobe’s documentation is clear on that point: Decisioning is about a centralized catalog of decision items and an engine that applies rules and rankings to determine what should be shown. Pega’s official material is similar: define business structures, implement constraints, configure prioritization, and deliver next best actions through channels.

Aarron Spinley’s The Customering Method is useful precisely because it treats that capability seriously without romanticizing it. Spinley positions complex Decisioning as the engine beneath orchestration, then places orchestration in the larger role: applying that Decisioning capability across channels, assets, touchpoints, and systems. He also makes explicit that mature Decisioning includes delay and cancellation, not just activation.

That is the right scale for the idea.

Decisioning is important. It is not sovereign.

This is not a revolution

Part of the hype comes from talking about Decisioning as if it were a newly invented category. It is not. Enterprises have long used rules, triggers, scoring, prioritization, suppression, and recommendation logic to shape customer interactions. What has changed is the cost curve, the speed, the complexity of the evaluation, and the volume of actions that can now be considered in real time.

Let me draw that contrast directly. In simpler systems, a point-in-time customer action triggers a reaction through business rules. In complex Decisioning, a larger matrix is executed in real time, involving action sets, exclusions, filtering, eligibility, and prioritization.
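To make the contrast concrete, here is a minimal Python sketch. It is illustrative only: the `Action` fields, action names, thresholds, and scoring formula are hypothetical and do not reflect Adobe's or Pega's APIs. The simple rule maps one event to one reaction; the complex path runs a whole action set through eligibility, exclusions, filtering, and prioritization on every evaluation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    # Hypothetical action definition; field names are illustrative only.
    name: str
    category: str          # e.g. "sales" or "service"
    min_tenure_days: int   # eligibility threshold
    priority: float        # static business priority
    propensity: float      # model score for this customer, 0..1


def simple_rule(event: str) -> Optional[str]:
    # Simpler system: one point-in-time event triggers one fixed reaction.
    return "send_welcome_email" if event == "signup" else None


def complex_decision(actions: list[Action], tenure_days: int,
                     excluded: set[str], open_complaint: bool,
                     max_results: int = 2) -> list[str]:
    # 1. Eligibility: keep only actions this customer qualifies for.
    eligible = [a for a in actions if tenure_days >= a.min_tenure_days]
    # 2. Exclusions: drop actions explicitly excluded for this customer.
    allowed = [a for a in eligible if a.name not in excluded]
    # 3. Filtering: an open complaint removes every sales action.
    if open_complaint:
        allowed = [a for a in allowed if a.category != "sales"]
    # 4. Prioritization: rank by priority x propensity, keep the top few.
    ranked = sorted(allowed, key=lambda a: a.priority * a.propensity,
                    reverse=True)
    return [a.name for a in ranked[:max_results]]


EXAMPLE_ACTIONS = [
    Action("upgrade_offer", "sales", 90, 1.0, 0.6),
    Action("loyalty_discount", "sales", 365, 0.8, 0.9),
    Action("service_check_in", "service", 0, 0.7, 0.5),
    Action("cross_sell_card", "sales", 30, 0.9, 0.4),
]

print(complex_decision(EXAMPLE_ACTIONS, 120, {"cross_sell_card"}, False))
# ['upgrade_offer', 'service_check_in']
print(complex_decision(EXAMPLE_ACTIONS, 120, {"cross_sell_card"}, True))
# ['service_check_in']
```

The same customer gets a different result the moment one contextual signal (the open complaint) changes, which is exactly what a single trigger rule cannot express.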

So no, this is not a revolution. It is an old operational function entering a more powerful computational regime.

That distinction matters because when the market forgets the lineage of a capability, it tends to exaggerate its novelty and under-examine its liabilities.

When a capability starts pretending to be the architecture

This is the central category error.

Architecture is not the act of picking the next thing to say. Architecture is the design of the system that governs continuity, state, timing, context, authority, coordination, openness, and accountability across interactions.

Decisioning operates inside that system. It cannot define the whole of it.

The Engagement Fabric defines an Engagement Layer between channels and experiences, made up of encounters plus the changing context in which they occur. Inside that layer sit multiple capabilities: identification, event capture, context definition and enrichment, rules management, recommendations and content, arbitration, orchestration, and analytics. Decision-relevant functions are present, but they are part of a broader architectural structure rather than its synonym.

The Martech Maturity Reference Framework reinforces the same point. Decisioning sits inside the Engagement OS alongside identity resolution, interaction management, journey orchestration, journey analytics, continuous intelligence, and intent reasoning. It is important, but not singular.

The CX-Led Architecture paper makes the same point in different terms. Experience context is a sliced view of the system at a given time. Experience records are chained interactions over time. Interactions may pause and resume across asynchronous moments. That is architecture: temporal, structural, systemic.

A decision engine can choose an action. Architecture determines whether the system remains coherent across actions.

The market is increasingly talking as if Decisioning were the architecture. It is not. It is a capability that depends on architecture to prevent it from becoming expensive chaos.

The trouble with next best action

No phrase has done more to popularize Decisioning than “Next Best Action.” Pega deserves credit for industrializing the phrase and turning it into a disciplined operating model. That has been genuinely useful. It has helped move the industry beyond brittle channel rules and toward more contextual forms of selection.

But the phrase also shrinks the problem.

It implies that the central challenge is to find a single best move. It assumes the system’s main job is intervention. In mature customer systems, that is often false.

Sometimes the right outcome is a suppression. Sometimes it is a service intervention. Sometimes it is a deferral. Sometimes it is silence. Sometimes the most valuable thing a company can do is stop itself.

The Customering Method makes clear that complex Decisioning includes delaying an action, cancelling an action, or not acting at all. And when orchestration extends that capability across all channels and behaviors, the frame changes from “Next Best Action” to “Next Best Conversation.”

That is not a cosmetic upgrade. It is a conceptual correction.

An action is one-way. A conversation is two-way. An action assumes intervention. A conversation assumes context, continuity, reciprocity, and adaptation. An action belongs naturally to campaign logic. A conversation belongs more naturally to stewardship.

That is why Next Best Action is too small a question.

The real job is not selection. It is arbitration.

If there is one underappreciated word in this whole field, it is arbitration.

The most valuable thing advanced Decisioning does is not merely picking a message or offer. It is arbitrating among competing obligations, competing goals, competing contexts, and competing risks. It is resolving conflict between service and sales, between short-term lift and long-term relationship value, between local optimization and system continuity.

Arbitration is the application of static and dynamic data, live behavior, history, propensity, and contextual signals to choose the best conversation to pursue. It does not require centralizing all data, only timely access to the right data. It may trigger care actions, CRM actions, technical tickets, service follow-up, and promotion suppression when the customer context makes selling inappropriate.
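A minimal sketch of arbitration in that sense, assuming a hypothetical `CustomerContext` with a handful of signals and invented thresholds (`contact_cap`, `propensity_floor`). It shows service outranking sales (care actions plus promotion suppression) and, in line with the delay-or-don't-act point made earlier, deferral and deliberate silence as valid outcomes.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    ACT = "act"
    DEFER = "defer"
    NO_ACTION = "no_action"


@dataclass
class CustomerContext:
    # Hypothetical signals; a real system would draw these from static data,
    # live behavior, history, and propensity models.
    open_ticket: bool = False
    recent_outage: bool = False
    contacts_last_7_days: int = 0
    offer_propensity: float = 0.0


@dataclass
class Conversation:
    outcome: Outcome
    actions: list[str] = field(default_factory=list)
    suppressed: list[str] = field(default_factory=list)
    reason: str = ""


def arbitrate(ctx: CustomerContext, contact_cap: int = 3,
              propensity_floor: float = 0.3) -> Conversation:
    # Service obligations outrank sales: care actions go out and
    # promotions are suppressed while the issue is live.
    if ctx.open_ticket or ctx.recent_outage:
        care = ["service_follow_up"]
        if ctx.recent_outage:
            care.append("technical_ticket")
        return Conversation(Outcome.ACT, care, ["promotions"],
                            "service issue makes selling inappropriate")
    # Relationship over short-term lift: defer instead of adding contact.
    if ctx.contacts_last_7_days >= contact_cap:
        return Conversation(Outcome.DEFER, reason="contact cap reached")
    # Silence is a legitimate result when no option clears the bar.
    if ctx.offer_propensity < propensity_floor:
        return Conversation(Outcome.NO_ACTION, reason="no strong reason to act")
    return Conversation(Outcome.ACT, ["personalized_offer"],
                        reason="sales conversation is appropriate")
```

The fixed precedence (service, then contact fatigue, then propensity) is a simplification chosen for readability; a production arbiter would more likely weigh these signals jointly rather than in a strict order.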

That is a far more serious conception of the field than “the right message at the right time.”

It shifts Decisioning from message optimization to responsibility management.

And once it is framed that way, the next obvious question is not “what should the system do next?” It is “what should the system do when what it did was wrong?”

The test the industry forgot

This is where the current Decisioning discourse starts to crack.

Read the official vendor material. Adobe talks about centralized decision items, rules, ranking, AI-powered optimization, and delivery at scale. Pega talks about always-on brains, next best actions, constraints, prioritization, and globally optimized strategies. CDP Institute’s AI Decisioning framing talks about optimizing every touchpoint without rigid rules. The common orientation is selection, ranking, optimization, and execution.

What is missing as a first-class idea is Reversibility.

Not monitoring. Not reporting. Not governance boilerplate. Not “humans in the loop” as a slogan.

Reversibility.

What happens when a decision proves wrong, becomes stale, contaminates the state, creates downstream contradiction, or triggers actions that now need to be compensated for?

Across the leading Decisioning material, the pattern is consistent: plenty on choosing, ranking, optimizing, and scaling; very little on correcting, compensating, and unwinding.

That omission is not small. It is the fault line.

A Decisioning System is not mature because it can choose the Next Best Action. It is mature because it can recover from the wrong one.

That is the test the industry forgot.

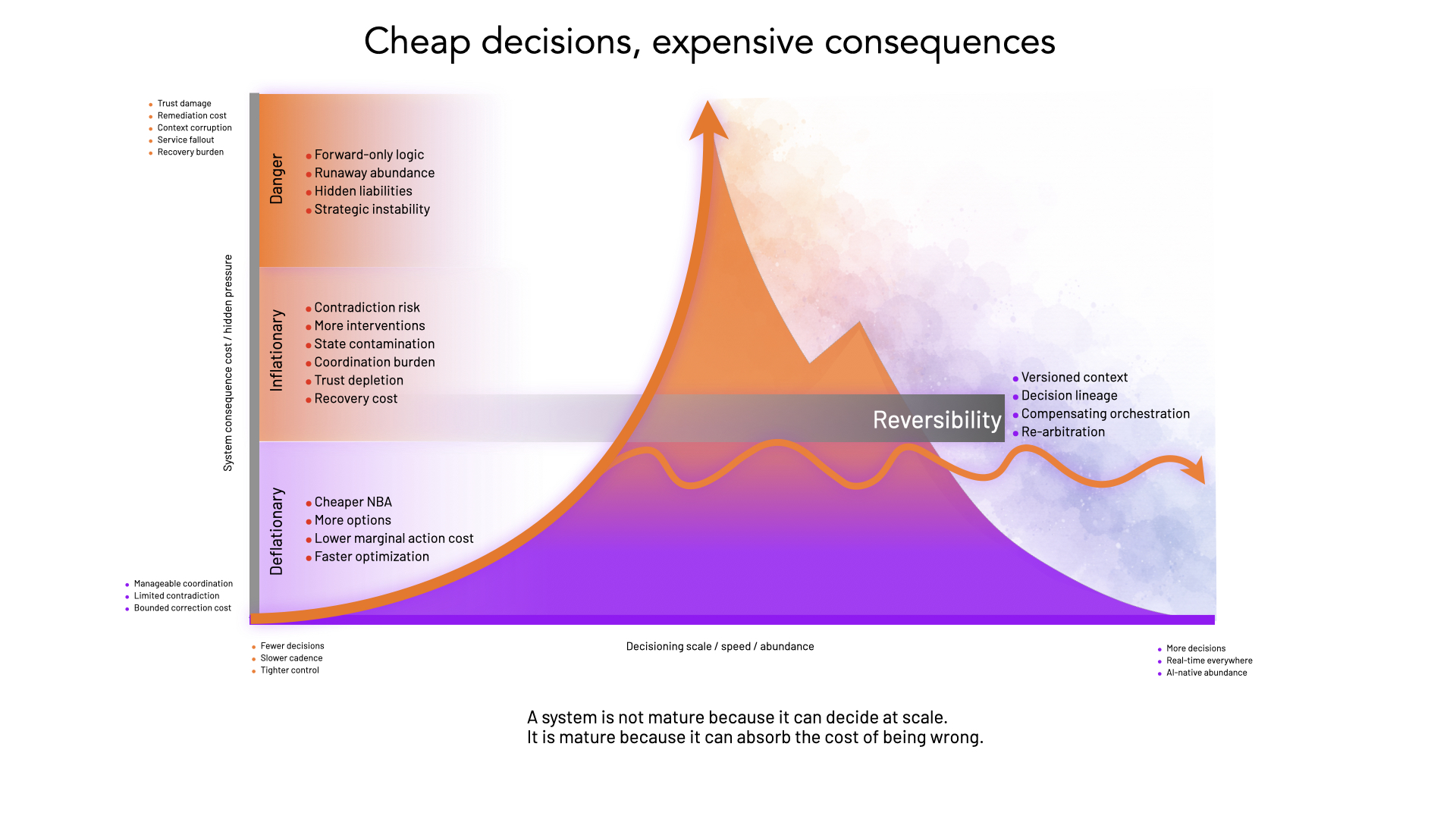

Cheap decisions, expensive consequences

This is where the economics come in, and this is where the conversation gets serious.

AI-powered Decisioning is economically deflationary at the margin. Adobe frames Decisioning as delivering personalized decisions at scale, with centralized items and AI-powered rankings. Pega frames it as an always-on decision brain that can unify data, analytics, and channels to deliver next best actions in real time across channels.

That means the marginal cost of generating a Next Best Action (NBA) is collapsing.

- Cheaper selection.

- More actions evaluated.

- More personalized messages.

- More treatments tested.

- More decisions made per customer per unit of time.

At the edge, Decisioning is deflationary.

But that is only half the story.

When the marginal cost of producing decisions approaches zero, organizations tend to produce far more of them. And that creates inflationary effects elsewhere in the system: more interventions, more decision traffic, more coordination burden, more contradiction risk, more state changes, more suppression complexity, more trust depletion, more remediation cost.

This is the economic blind spot.

The market is celebrating Decisioning as if cheaper and faster action generation were inherently good. It is not. Lower marginal cost at the point of action can lead to higher aggregate costs in coordination, error handling, service fallout, and recovery. McKinsey’s recent martech work makes the broader point that martech creates durable value only when tied to business outcomes and operating models rather than operational vanity metrics, and that many marketing leaders still struggle to credibly articulate martech ROI.

That is exactly the problem here.

Decisioning can make action cheap without making judgment cheap.

It can make options abundant without making outcomes sustainable.

That is the strategist’s correction that the current discourse badly needs.

The inflationary economics of abundance

There is a deeper economic problem under all this: abundance itself.

The current Decisioning story often treats more signals, more options, more possible actions, and more rapid optimization as inherently beneficial. But information economics is not that simple. More information creates interpretation costs, coordination costs, arbitration burdens, and diminishing returns. More options do not guarantee better outcomes. Sometimes they generate pseudo-precision, escalating noise, and growing pressure to intervene simply because the system can.

The CX-Led Architecture does not treat context as an infinite pile of signals. It treats experience context as a sliced view of the system at a given time. That is a disciplined concept: not all information, but relevant information, structured in time.

That leads to a much better question than the current market usually asks:

Is Decisioning becoming a machine for generating infinite options without a theory of informational cost, sustainability, or scalability?

That is not a rhetorical flourish. It is a strategic test.

Because once AI can score more actions against more signals more often, the limiting factor is no longer computation. The limiting factor becomes customer tolerance, organizational governance, interpretability, recovery capacity, and operating sustainability.

Instead of asking only “Can the system consider more options?”, ask a second question with equal weight: “What is the cost of option abundance?”

That cost shows up in at least four places:

- Attention economics: more decisions often mean more interventions competing for finite customer attention.

- Coordination economics: more decisions mean more arbitration across channels, products, service obligations, and teams.

- Error economics: when decision volumes rise, even low error rates produce large absolute numbers of bad outcomes.

- Recovery economics: once bad decisions propagate across systems and states, the cost of correction rises non-linearly.
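The error-economics point is plain arithmetic. A short Python sketch with invented figures (one million customers, one versus thirty decisions per customer per month, error rates of 1% and 0.5%; none of these numbers come from the sources) shows that doubling accuracy does not offset a thirtyfold rise in volume: absolute bad outcomes still grow fifteenfold.

```python
def expected_bad_outcomes(customers: int, decisions_per_customer: int,
                          error_rate: float) -> int:
    """Expected number of wrong decisions in a period: volume x error rate."""
    return round(customers * decisions_per_customer * error_rate)


# Hypothetical scenario: the same customer base, before and after
# decision volume scales up while the model becomes twice as accurate.
before = expected_bad_outcomes(1_000_000, decisions_per_customer=1, error_rate=0.01)
after = expected_bad_outcomes(1_000_000, decisions_per_customer=30, error_rate=0.005)
print(before, after, after / before)  # 10000 150000 15.0
```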

Function without economics is fantasy.

When AI can act but cannot recover

Now the architectural and economic arguments converge.

If AI-powered Decisioning is conceived as forward-only, the risks multiply.

The first is state contamination. The Engagement Fabric says interactions should be able to read persisted customer context and update it after each interaction if needed. That is powerful, but it means bad decisions can become bad context for the next decision if the architecture has no mechanism for correction.

The second is cross-system propagation. The orchestration model in The Customering Method is explicitly cross-channel and cross-system. It can trigger service texts, CRM actions, technical tickets, and suppression flows, and push data back into systems of record. A bad decision is not just a bad message. It can become a bad state across multiple systems.

The third is damage to trust through persistence. A wrong recommendation is irritating. A wrong recommendation that follows the customer, contradicts their actual situation, or keeps reappearing because the system cannot correct itself is much worse.

The fourth is AI acceleration without recovery. NIST’s AI Risk Management Framework exists because organizations need ways to manage AI risks and promote trustworthy, responsible development and use of AI systems. Reversibility is what makes that concern architectural rather than merely rhetorical.
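The first risk, state contamination, is the easiest to make concrete. A minimal sketch, assuming a hypothetical append-only context log (the `ContextLog` and `ContextWrite` names are illustrative, not from the Engagement Fabric paper): every write is attributed to the decision that caused it and timestamped, the current view is derived by replaying writes, and invalidating a decision removes its influence without erasing the history.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContextWrite:
    key: str
    value: object
    decision_id: str        # attribution: which decision caused this write
    written_at: datetime    # time-awareness: when the write happened


class ContextLog:
    """Append-only, attributable customer context (hypothetical design)."""

    def __init__(self) -> None:
        self._writes: list[ContextWrite] = []
        self._invalidated: set[str] = set()

    def write(self, key: str, value: object, decision_id: str) -> None:
        now = datetime.now(timezone.utc)
        self._writes.append(ContextWrite(key, value, decision_id, now))

    def invalidate_decision(self, decision_id: str) -> None:
        # Correction without erasure: the writes stay in history
        # but no longer shape the current view.
        self._invalidated.add(decision_id)

    def current(self) -> dict[str, object]:
        # Replay valid writes in order; the latest valid write per key wins.
        view: dict[str, object] = {}
        for w in self._writes:
            if w.decision_id not in self._invalidated:
                view[w.key] = w.value
        return view

    def history(self, key: str) -> list[tuple[object, str, bool]]:
        # Full audit trail for one key: (value, decision_id, still_valid).
        return [(w.value, w.decision_id, w.decision_id not in self._invalidated)
                for w in self._writes if w.key == key]


log = ContextLog()
log.write("segment", "loyal", decision_id="d1")
log.write("segment", "churn_risk", decision_id="d2")      # later proves wrong
log.write("last_offer", "retention_discount", decision_id="d2")
print(log.current())  # {'segment': 'churn_risk', 'last_offer': 'retention_discount'}
log.invalidate_decision("d2")
print(log.current())  # {'segment': 'loyal'}
```

One simplification to flag: invalidating a decision here drops only that decision's own writes. If later decisions were made on the contaminated view, they would also need to be traced and re-evaluated, which is the job of decision lineage.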

Forward intelligence without reversibility is not architecture. It is acceleration.

And that is where a blow-off-top analogy becomes useful. In markets, blow-off tops happen when speed, abundance, and confidence detach from sustainable structure. AI-native Decisioning has the same risk profile: more actions, more optimization, more local wins, more confidence, more system pressure, more latent contradiction, then a sharp correction in the form of trust damage, service fallout, suppression complexity, or remediation cost.

Reversibility is the hedge.

Not because it prevents all errors, but because it limits how far forward-only abundance can run before the system pays for it.

Recovery is an architectural property

This is why reversibility cannot be treated as a bolt-on feature.

It is not just a governance checklist. It is not just model monitoring. It is not just an approvals layer. It is not just a postmortem discipline.

Reversibility is an architectural property.

A reversible Engagement OS would require at least four things:

- Versioned context. If context changes after interactions, it must be attributable, time-aware, and correctable. The CX-Led Architecture paper already treats experience context and experience records as temporal structures rather than static blobs. Reversibility extends that logic.

- Decision lineage. If a decision was made, the system must know what context, rules, priorities, models, and constraints informed it, and what downstream actions it triggered.

- Compensating orchestration. AWS’s saga guidance is a useful analogy: in distributed workflows, compensating transactions are used to preserve integrity when later steps fail or must be unwound. Customer Decisioning is not identical to distributed transaction management, but the lesson is obvious: if decisions create downstream state, the architecture needs compensating flows.

- Re-arbitration. Arbitration logic chooses the best conversation from dynamic context, history, and live behavior. Reversibility extends that across time: when a decision is later invalidated, the system must re-arbitrate from corrected context, not just continue the old path.
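The decision-lineage, compensating-orchestration, and re-arbitration requirements can be sketched together. The shape borrows from the saga pattern in the AWS guidance mentioned above (each forward step paired with a compensating step, compensations run in reverse order), but everything here is hypothetical: `DecisionRecord`, `Step`, `invalidate`, the `inputs` keys, and the `rearbitrate` callback are illustrative names, not an AWS, Adobe, or Pega API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    # One downstream effect of a decision, paired with its compensation.
    system: str                    # illustrative system name, e.g. "crm"
    do: Callable[[], None]         # forward action
    undo: Callable[[], None]       # compensating action, not a literal rollback


@dataclass
class DecisionRecord:
    """Decision lineage: what informed a decision and what it triggered."""
    decision_id: str
    inputs: dict[str, str]         # context version, rules, model, constraints
    steps: list[Step]
    completed: list[Step] = field(default_factory=list)

    def run(self) -> None:
        for step in self.steps:
            try:
                step.do()
            except Exception:
                # A later step failed: compensate the steps already done.
                self.compensate()
                raise
            self.completed.append(step)

    def compensate(self) -> None:
        # Saga-style unwinding: compensate completed steps in reverse order.
        while self.completed:
            self.completed.pop().undo()


def invalidate(record: DecisionRecord, corrected_context: dict[str, object],
               rearbitrate: Callable[[dict[str, object]], str]) -> str:
    # Reversibility as arbitration across time: unwind the wrong decision's
    # effects, then decide again from corrected context.
    record.compensate()
    return rearbitrate(corrected_context)


calls: list[str] = []
record = DecisionRecord(
    decision_id="dec-1",
    inputs={"context_version": "v42", "rules": "retention-rules-v7",
            "model": "churn-propensity-v3"},
    steps=[
        Step("crm", lambda: calls.append("crm: flag at_risk"),
             lambda: calls.append("crm: clear at_risk")),
        Step("sms", lambda: calls.append("sms: retention offer"),
             lambda: calls.append("sms: correction notice")),
    ],
)
record.run()
next_step = invalidate(record, {"churn_risk": False},
                       lambda ctx: "retention_offer" if ctx["churn_risk"]
                       else "service_check_in")
print(calls)
# ['crm: flag at_risk', 'sms: retention offer', 'sms: correction notice', 'crm: clear at_risk']
print(next_step)  # service_check_in
```

Note that the compensation for a sent message is a follow-up (a correction notice), not an un-send: in customer systems most compensations are new actions, which is why they have to be designed rather than assumed.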

That may be the strongest formulation in the whole argument:

Reversibility is arbitration across time.

And it is also the price signal missing from forward-only Decisioning.

If AI drives the marginal cost of next best action toward zero, reversibility is what puts consequence back into the equation.
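A simplified way to write that price signal down, assuming a period with $N$ decisions, a marginal generation cost $c_g$ per decision, an error rate $p$, and an average recovery cost $c_r$ per wrong decision (symbols introduced here for illustration; they do not come from the cited sources):

$$
C_{\text{total}} = N\,c_g + N\,p\,c_r = N\,(c_g + p\,c_r)
$$

As $c_g \to 0$, $C_{\text{total}} \to N\,p\,c_r$: the cost of the whole decision stream is governed by volume, error rate, and recovery cost, not by the cost of choosing. If cheaper choosing also pushes $N$ up, total cost can rise even as per-decision generation cost falls. The model treats $c_r$ as a constant, so it understates the non-linear recovery costs described earlier; reversibility is the design lever that acts on $c_r$.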

Go read your vendor again

Now the challenge.

Go back and read your favorite Decisioning vendor. Read the analyst you trust. Read the practitioner telling you AI-native Decisioning is the future of the stack.

Then let me ask you some simple questions:

- Did they tell you what happens when the decision was wrong?

- Did they show you how context is corrected?

- Did they explain how downstream actions are compensated for?

- Did they treat reversibility as a first-class concern?

- Did they say anything serious about the economics of abundance, about what happens when the marginal cost of generating actions collapses but the cost of contradiction, remediation, and trust damage does not?

Across the dominant vendor and analyst narratives, those questions are barely addressed. The emphasis remains on choosing, ranking, optimizing, and scaling. Much less attention is given to correcting, compensating, and sustaining.

That is the gap. Not a technical gap. A strategic one.

The real maturity test

The industry is right to elevate Decisioning from a niche technical function into a more central operating capability. The old world of brittle triggers, disconnected rules, and campaign-centric reaction logic is not enough for the complexity of modern customer systems.

But the industry is wrong when it treats Decisioning as the new master abstraction.

Decisioning is not the architecture. It never was. And the economics of AI-powered abundance make that even clearer.

At the edge, Decisioning is deflationary. It makes Next Best Action cheap.

At the core, it is inflationary. It increases system burden, contradiction risk, and recovery cost.

That is why reversibility matters so much.

A mature system is not one that only knows how to act. It is one that knows how to recover. It can detect bad state, correct context, compensate for downstream effects, re-arbitrate the customer’s situation, and absorb the consequences of speed and abundance without collapsing into contradiction.

That is the real test.

Not whether the system can choose the next best action. Whether it can survive the wrong one.

References

Adobe Experience League. “Decisioning overview.”

https://experienceleague.adobe.com/en/docs/journey-optimizer/using/decisioning/experience-decisioning/experience-decisioning-landing-page

Adobe Experience League. “Decision management/offer decisioning.”

https://experienceleague.adobe.com/en/docs/journey-optimizer/using/decisioning/offer-decisioning/offer-decisioning-landing-page

Pega Documentation. “Understanding the Next-Best-Action Designer strategy framework.”

https://docs.pega.com/bundle/customer-decision-hub-242/page/customer-decision-hub/cdh-portal/nba-strategy-overview.html

CDP Institute / Hightouch. “The next wave of MarTech: AI Decisioning.”

https://www.cdpinstitute.org/hightouch/the-next-wave-of-martech-ai-decisioning/

McKinsey. “Agents for growth: Turning AI promise into impact.”

https://www.mckinsey.com/capabilities/growth-marketing-and-sales/our-insights/agents-for-growth-turning-ai-promise-into-impact

NIST. “Artificial Intelligence Risk Management Framework (AI RMF 1.0).”

https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-ai-rmf-10

AWS Prescriptive Guidance. “Saga orchestration pattern.”

https://docs.aws.amazon.com/prescriptive-guidance/latest/cloud-design-patterns/saga-orchestration.html

Aarron Spinley. The Customering Method.

https://www.fieldbell.co/resources/our-core-text

Enterprise MarTech. The Engagement Fabric White Paper.

https://enterprisemartech.com/papers/the-engagement-fabric

Enterprise MarTech. CX-Led Architecture White Paper.

https://enterprisemartech.com/e-books/cx-led-architecture-framework

Enterprise MarTech. Martech Maturity Reference Framework.

https://enterprisemartech.com/templates/martech-maturity-reference-framework
